Hi All,

I'd like to shed some light on how HugePages works and how it relates to the SGA, to clear up some common confusion.

1- HugePages is not required for the SGA; the database runs without it, but setting it is advisable for better memory utilization.

2- If HugePages is set, its value depends on the SGA size.

3- If the SGA size changes (increase or decrease), HugePages must be recalculated accordingly.

Let me illustrate it:

_*Case 1 -- Hugepages not set*_

========================

* Run cat /proc/meminfo; if HugePages_Total = 0, hugepages is not set

* Suppose we want to set the hugepages for 5GB SGA, do the following:

o First convert the 5GB to MB: 5*1024 = 5120MB

o Divide that value by the huge page size in MB (2MB by default on x86 Linux; see Hugepagesize in /proc/meminfo): 5120/2 = 2560

o Add roughly 3% for overhead (rounded up here to 85 pages): 2560+85 = 2645, which is the hugepages value

o Set vm.nr_hugepages = 2645 in /etc/sysctl.conf
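The steps above can be sketched as a small calculator. This is only an illustration under stated assumptions: the function name and parameters are hypothetical, the page size defaults to 2 MB, and the overhead is computed as a strict 3% rounded up, which gives 2637 for a 5 GB SGA, a little lower than the 2645 used in the text (which adds 85 pages).

```python
def hugepages_for_sga(sga_gb: int, page_mb: int = 2, overhead_pct: int = 3) -> int:
    """Return a candidate vm.nr_hugepages value for an SGA of sga_gb gigabytes.

    Hypothetical helper: page_mb and overhead_pct are assumptions, not Oracle defaults.
    """
    sga_mb = sga_gb * 1024                            # GB -> MB
    base_pages = -(-sga_mb // page_mb)                # ceiling division: pages for the SGA itself
    overhead = -(-base_pages * overhead_pct // 100)   # overhead percentage, rounded up
    return base_pages + overhead


print(hugepages_for_sga(5))  # 5 GB SGA with 2 MB pages
```

Integer arithmetic is used throughout so the rounding is explicit; pass a different overhead_pct if your site uses a larger safety margin.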

_*Case 2 - SGA size changed*_

======================

* If the SGA size is changed (buffer cache or shared_pool), adjust the hugepages accordingly as follows:

* Suppose we're increasing the SGA by 2GB (from 5GB to 7GB). We must then increase the hugepages by the equivalent of 2GB, or set it for the full 7GB if it is not set yet. Assuming the current value is set correctly and only the 2GB increase is needed:

o Convert the 2GB to MB: 2*1024 = 2048MB

o Divide that value by 2 and add 3%: 2048/2 = 1024, and 1024+30 = 1054

o Add the result to the current hugepages value: 2645+1054 = 3699, which is the new hugepages value

o Set vm.nr_hugepages = 3699 in /etc/sysctl.conf
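Before resizing, it can help to check whether the current pool already covers the new SGA. The following is a minimal sketch, assuming the usual /proc/meminfo layout (HugePages_Total as a page count, Hugepagesize in kB); the helper names and the sample text are hypothetical, and the check deliberately ignores the overhead margin.

```python
def parse_meminfo(text: str) -> dict:
    """Map each /proc/meminfo field name to its integer value (unit suffix dropped)."""
    fields = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        if sep and rest.split():
            fields[name.strip()] = int(rest.split()[0])
    return fields


def hugepages_shortfall(meminfo: dict, sga_mb: int) -> int:
    """Pages still missing to back sga_mb of SGA; 0 means the pool is large enough."""
    page_mb = meminfo["Hugepagesize"] // 1024   # the file reports this value in kB
    needed = -(-sga_mb // page_mb)              # ceiling division
    return max(0, needed - meminfo["HugePages_Total"])


# Hypothetical excerpt of /proc/meminfo after Case 1 was applied.
sample = """HugePages_Total:    2645
HugePages_Free:     2645
Hugepagesize:       2048 kB"""

info = parse_meminfo(sample)
print(hugepages_shortfall(info, 5 * 1024))  # 5 GB SGA
print(hugepages_shortfall(info, 7 * 1024))  # 7 GB SGA
```

On a real server, the text would come from reading /proc/meminfo instead of the sample string.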

And remember to always check the values of kernel.shmmax and kernel.shmall in /etc/sysctl.conf.

Please refer to the attached table for how to set the values of these parameters:

HAPPY LEARNING!

Saturday, October 9, 2010

How To Prevent Inactive JDBC Connections In Oracle Applications

Applies to:

=======

Oracle Application Object Library - Version: 11.5.9 to 11.5.10.2

This problem can occur on any platform.

Symptoms

=======

Many Inactive JDBC connections causing performance issues in the database and in framework pages

Cause

=====

There are many reasons why inactive JDBC sessions occur. The following is a brief description of how JDBC connections are established and maintained in the pool.

In an E-Business Suite environment, JDBC connections are established with the server when a database connection request comes from the client.

In Oracle Applications, the JDBC thin driver is used out of the various database connection drivers.

The dbc file under the $FND_TOP/secure directory contains various parameters that control the connection to the database upon receiving a request from Apache JServ. The following are the important parameters in the dbc file:

FND_MAX_JDBC_CONNECTIONS=100

FND_JDBC_BUFFER_MIN=5

FND_JDBC_BUFFER_MAX=5

FND_JDBC_BUFFER_DECAY_INTERVAL=60

FND_JDBC_BUFFER_DECAY_SIZE=1

FND_JDBC_USABLE_CHECK=true

FND_JDBC_CONTEXT_CHECK=true

FND_JDBC_PLSQL_RESET=false
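To inspect these settings programmatically, a minimal sketch like the one below can read KEY=VALUE lines. It is an assumption-laden illustration: the function name is hypothetical, the sample text simply repeats some of the values listed above, and skipping lines that start with '#' is an assumed comment convention, not something the dbc format is documented here to guarantee.

```python
def parse_dbc(text: str) -> dict:
    """Parse KEY=VALUE lines, skipping blanks and (assumed) '#' comment lines."""
    params = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        params[key.strip()] = value.strip()
    return params


# Sample content copied from the parameter list above.
sample_dbc = """
FND_MAX_JDBC_CONNECTIONS=100
FND_JDBC_BUFFER_MIN=5
FND_JDBC_BUFFER_MAX=5
FND_JDBC_BUFFER_DECAY_INTERVAL=60
FND_JDBC_BUFFER_DECAY_SIZE=1
"""

# Keep only the connection-pool parameters.
pool = {k: v for k, v in parse_dbc(sample_dbc).items() if k.startswith("FND_")}
print(pool["FND_MAX_JDBC_CONNECTIONS"])
```

Values are kept as strings so that flags such as true/false and numeric settings can be compared without conversion.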

The AOL/J database connection pool is intended to hold a farm of open JDBC connections to the database, which the Java code running in the OACoreGroup can borrow for a short time. Performance-wise this is more efficient, since it saves opening and closing a JDBC connection each time. However, it also means that a connection can be idle for quite a long time when there is little activity in the system.

Note that each JVM has its own connection pool. So, if there are 2 JVMs running for OACore, then there are also 2 connection pools.

This is important since it also means that the maximum number of JDBC connections in this case is 2 x FND_MAX_JDBC_CONNECTIONS.

Especially in large environments with multiple MT servers and multiple JVMs, the total number of connections could become too large.
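The multiplication is simple but easy to underestimate. A small sketch of the ceiling, assuming every JVM on every middle-tier server uses the same FND_MAX_JDBC_CONNECTIONS (the function name and the four-server example are hypothetical):

```python
def max_pool_connections(mt_servers: int, jvms_per_server: int, max_per_pool: int) -> int:
    """Upper bound on JDBC connections: one pool per JVM, each capped at max_per_pool."""
    return mt_servers * jvms_per_server * max_per_pool


print(max_pool_connections(1, 2, 100))  # the 2-JVM case described above
print(max_pool_connections(4, 4, 100))  # hypothetical: 4 MT servers with 4 JVMs each
```

Comparing this ceiling with the database's session limit shows quickly whether the pools could exhaust it in the worst case.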

Unfortunately there is no mechanism implemented in the connection pool which performs some kind of 'heartbeat' (like we have in Forms) for idle connections.

Also there is no mechanism in the Connection pool to determine whether the JDBC connection to the database has been dropped.

So the JDBC connection in the pool still seems to be valid until some code borrows it and then finds out that the connection has been dropped.

It is possible to drop all the INACTIVE connections at once. However, when a large number of new JDBC connection requests arrive later, many new connections have to be created, which does not help system performance. The JDBC connection pool neither knows nor cares whether a given user is still logged in; it only cares how many different user sessions need access to the database right now.

Solution

======

The following proactive checks help prevent a high number of inactive JDBC connections:

1. Check the JDK version used in the instance. From JDK 1.4.2 onwards, the rule of thumb is 1 JVM per CPU for every 100 active connected users of OACoreGroup.

Use the following script to determine "active users" for OACoreGroup:

REM
REM SQL to count number of Apps 11i users
REM Run as APPS user
REM
select 'Number of user sessions : ' ||
       count(distinct session_id) How_many_user_sessions
from icx_sessions icx
where disabled_flag != 'Y'
and PSEUDO_FLAG = 'N'
and (last_connect + decode(FND_PROFILE.VALUE('ICX_SESSION_TIMEOUT'), NULL,limit_time, 0,limit_time,FND_PROFILE.VALUE('ICX_SESSION_TIMEOUT')/60)/24) > sysdate
and counter < limit_connects;
REM
REM END OF SQL
REM

Note 362851.1 : Guidelines to setup the JVM in Apps Ebusiness Suite 11i and R12

2. For application version 11.5.10 onwards, ensure ATG_PF.H is applied to the instance. Also ensure that you are using the latest version of the JDBC driver. You can run the following SQL to get the current JDBC driver version in the system:

select bug_number, decode(bug_number,

'3043762','JDBC drivers 8.1.7.3',

'2969248','JDBC drivers 9.2.0.2',

'3080729','JDBC drivers 9.2.0.4 (OCT-2003)',

'3423613','JDBC drivers 9.2.0.4 (MAR-2004)',

'3585217','JDBC drivers 9.2.0.4 (MAY-2004)',

'3882116','JDBC drivers 9.2.0.5 (OCT-2004)',

'3966003','JDBC drivers 9.2.0.5 (OCT-2004)',

'3981178','JDBC drivers 9.2.0.5 (NOV-2004)',

'4090504','JDBC drivers 9.2.0.5 (JAN-2005)',

'4201222','JDBC drivers 9.2.0.6 (MAY-2005)') Patch_description

from ad_bugs

where bug_number in

('3043762',

'2969248',

'3080729',

'3423613',

'3585217',

'3882116',

'3966003',

'3981178',

'4090504',

'4201222'

) order by 2;

3. Ensure that you have all the database initialization parameters set and all the recommended database performance patches applied on the instance as per the following notes :

Note 216205.1 : Database Initialization Parameters for Oracle Applications 11i

Note 396009.1 : Database Initialization Parameters for Oracle Applications Release 12

Note 244040.1 : Oracle E-Business Suite Recommended Performance Patches

4. Implement a strategy to minimize the JDBC connections

If JDBC connections are being retained in the pool, de-tuning the pool ensures that connections are dropped as soon as the application has finished with them.

Apart from keeping database connections to a minimum, this also helps to identify whether there is a JDBC connection leak.

a) De-tune JDBC connection pool

Apply through AutoConfig (or manually update the DBC file) on all Middle Tier servers

FND_JDBC_BUFFER_DECAY_INTERVAL=120

FND_JDBC_BUFFER_MIN=0

FND_JDBC_BUFFER_MAX=0

FND_MAX_JDBC_CONNECTIONS=256

FND_JDBC_USABLE_CHECK=true

FND_JDBC_BUFFER_DECAY_SIZE=5

Note : "FND_JDBC_USABLE_CHECK=true" is preferred for RAC, as discussed in the following notes:

Note 278868.1 : AOL/J JDBC Connection Pool White Paper

Note 294652.1 : E-Business Suite 11i on RAC : Configuring Database Load balancing & Failover
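To see which of the de-tuned values still need to be applied on a given server, the current settings can be compared with the recommended ones. This is a rough sketch: the function name is hypothetical, and the "current" dictionary simply repeats the default values listed earlier in this post rather than values read from a real DBC file.

```python
# De-tuned values from step 4 a) above.
RECOMMENDED = {
    "FND_JDBC_BUFFER_DECAY_INTERVAL": "120",
    "FND_JDBC_BUFFER_MIN": "0",
    "FND_JDBC_BUFFER_MAX": "0",
    "FND_MAX_JDBC_CONNECTIONS": "256",
    "FND_JDBC_USABLE_CHECK": "true",
    "FND_JDBC_BUFFER_DECAY_SIZE": "5",
}


def pool_setting_drift(current: dict) -> dict:
    """Return {name: (current_value, recommended_value)} for every mismatch."""
    return {
        name: (current.get(name), wanted)
        for name, wanted in RECOMMENDED.items()
        if current.get(name) != wanted
    }


# Illustrative input: the default values shown in the Cause section.
current = {
    "FND_MAX_JDBC_CONNECTIONS": "100",
    "FND_JDBC_BUFFER_MIN": "5",
    "FND_JDBC_BUFFER_MAX": "5",
    "FND_JDBC_BUFFER_DECAY_INTERVAL": "60",
    "FND_JDBC_BUFFER_DECAY_SIZE": "1",
    "FND_JDBC_USABLE_CHECK": "true",
}

drift = pool_setting_drift(current)
for name, (have, want) in drift.items():
    print(f"{name}: {have} -> {want}")
```

A missing parameter shows up with a current value of None, so it is reported as drift as well.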

5. Ensure that the current TCP settings match the values recommended by Oracle's tcpset.sh script and by the OS vendor's web site

Parameter                      Recommended value
tcp_ip_abort_interval          60,000
tcp_keepalive_interval         900,000
tcp_rexmit_interval_initial    1500

6. Check the jserv.properties "security" settings

Ensure that the current setting does not allow more connections to the JVM than there are JDBC connections available; allowing more is not best practice.

Changing security.backlog, in particular, can lead to user connections hanging.

Maintain default settings

security.maxConnections=256

# security.backlog=5

7. Check ApJServRetryAttempts parameter in Jserv.conf

This setting will delay any recovery of a dead JVM by mod_oprocmgr. If a JVM is not responding in 45 minutes (default setting) then tuning should be implemented to resolve this, rather than allowing 45 minutes of no response.

Maintain default settings

ApJServRetryAttempts 3

8. Disable JVM Distributed Caching

If some JVMs out of many are not servicing requests and are generating "java.lang.NoClassDefFoundError" errors, disabling distributed JVM caching would eliminate this cause of the problem.

Disabling Distributed JVM Cache is achieved by changing "LONG_RUNNING_JVM=" from "true" to "false" in the jserv.properties. This is controlled by AutoConfig parameter "s_long_running_jvm"

9. Prevent wastage of database connections on the system by setting the profile option 'FND: Application Module Pool Minimum Available Size' to 0 (which is the default).

After making the changes above, monitor connection utilization. Also collect the following information from the system periodically:

column module heading "Module Name" format a48;

column machine heading "Machine Name" format a15;

column process heading "Process ID" format a10;

column inst_id heading "Instance ID" format 99;

prompt

prompt Connection Usage Per Module and process

select to_char(sysdate, 'dd-mon-yyyy hh24:mi') Time from dual

/

prompt ~~~~

select count(*), machine, process, module from v$session

where program like 'JDBC%' group by machine, process, module order by 1 asc

/

10. The following SQL statements can be useful

+ To find total number of open database connections for a given JVM PID

SELECT s.process, Count(*) all_count FROM v$session s WHERE s.process IN () GROUP BY s.process

+ To find the number of database connections per JVM that have been inactive for longer than 30 minutes

SELECT s.process, Count(*) olderConnection_count FROM v$session s WHERE s.process IN ()

and s.last_call_et>=(30*60) and s.status='INACTIVE' GROUP BY s.process

+ To find the modules responsible for JDBC connections for a process id

SELECT Count(*), process,machine, program, MODULE FROM v$session s

WHERE s.process IN ('&id')GROUP BY process,machine, program, MODULE ORDER BY process,machine, program, MODULE;
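The filter in the second query (status INACTIVE and last_call_et of at least 30*60 seconds, grouped by process) can be mirrored on rows that have already been fetched. The sketch below only illustrates that logic; the function name and the sample rows are hypothetical and do not come from a real v$session.

```python
from collections import Counter


def stale_connections(rows, min_idle_seconds=30 * 60):
    """Count INACTIVE sessions per process that have been idle for at least min_idle_seconds.

    Each row is a (process, status, last_call_et) tuple, like the v$session columns.
    """
    return Counter(
        process
        for process, status, last_call_et in rows
        if status == "INACTIVE" and last_call_et >= min_idle_seconds
    )


# Hypothetical sample rows: (process, status, last_call_et in seconds).
rows = [
    ("4711", "INACTIVE", 5400),   # idle for 90 minutes: counted
    ("4711", "INACTIVE", 120),    # idle for 2 minutes: ignored
    ("4711", "ACTIVE", 0),        # active: ignored
    ("4822", "INACTIVE", 1800),   # exactly 30 minutes: counted
]

print(stale_connections(rows))
```

Lowering min_idle_seconds makes it easy to see how sensitive the count is to the chosen threshold.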

11. For the processes that show the highest counts in the query results above, log in to the JVM machine and run

"kill -3 jvmid"

where jvmid is the process ID seen in the query output above.

The resulting thread dump goes to the OACoreGroup.*.stdout file. Post those files to Oracle Support for review.

12. If there seem to be a lot of blocked sessions in the database, check 2 or 3 locked/blocked sessions to ascertain the user, SQL, and row locked.

You can use utllockt.sql ( Note 166534.1 : Resolving locking issues using utllockt.sql script ) as the starting point. Post the output from utllockt.sql in addition to the SQL / row lock information for 2 or 3 sessions to Oracle Support.

References

========

Note 164317.1 - Upgrading JDBC drivers with Oracle Applications 11i

Note 166534.1 - Resolving locking issues using utllockt.sql script

Note 216205.1 - Database Initialization Parameters for Oracle Applications Release 11i

Note 244040.1 - Oracle E-Business Suite Recommended Performance Patches

Note 278868.1 - AOL/J JDBC Connection Pool White Paper

Note 294652.1 - E-Business Suite 11i on RAC : Configuring Database Load balancing & Failover

HAPPY LEARNING!

Friday, October 8, 2010

AWR- Wait Event Classes

Hi,

I have tried to classify the AWR wait events and the actions to be taken for each wait events. This chart should help in diagnosing the wait events shown in AWR reports.

To view the chart clearly, click on it; it opens in a new page.

HAPPY LEARNING!

Saturday, October 2, 2010

Oracle Application Server FAQ

1. Which are the main 3 Oracle e-business platform components ?

2. What is the Oracle Application Server ?

3. Which are the key components of Oracle Application Server (OAS) ?

4. Which are the key components of the OAS Identity Management ?

5. Which are the 2 main components of Oracle Application Server ?

6. Which are the Middle-Tier components ?

7. Which are the main components of OracleAS Infrastructure ?

8. Which are the 3 categories of Metadata Repository ?

1. Which are the main 3 Oracle e-business platform components ?

• Oracle Application Server: to help deploy Internet applications

• Oracle Database Server: to store enterprise business data

• Oracle Developer Suite: to develop Internet applications

2. What is the Oracle Application Server ?

The Oracle Application Server is an integrated software infrastructure for enterprise applications. It is used to deploy Internet applications, to create and manage enterprise portals and mobile devices, to automate business processes, and to provide real-time business intelligence.

3. Which are the key components of Oracle Application Server (OAS) ?

• OC4J ( Oracle Application Server Containers for J2EE)

• Oracle HTTP Server

• Oracle Internet Directory

• Oracle AS Web Cache

• Oracle AS Portal

• Oracle AS Wireless

• Oracle Identity Management (in addition to OID, it includes the Oracle SSO and Oracle CA)

• Management, Integration and Security Components (Oracle Application Server Control, Oracle Internet Directory )

• Oracle Business Intelligence (Oracle AS Discoverer)

4. Which are the key components of the OAS Identity Management ?

• Oracle Internet Directory (OID) = single store for all types of user information

• Oracle Delegated Administrative Services (DAS) = manages the OID

• Oracle Directory Integration and Provisioning. Directory Integration refers to the synchronization of the OID with other directories and user repositories. Directory Provisioning refers to the Oracle AS 10g feature that enables the creation and management of users' accounts and privileges for various Oracle components and applications

• OracleAS Single Sign-On (SSO) = provides transparent logon to all SSO-enabled applications on all application servers in an Oracle AS farm

• OracleAS Certificate Authority (CA) = manages the public-key certificates.

5. Which are the 2 main components of Oracle Application Server ?

• Oracle AS Infrastructure = supports the functioning of the Oracle AS middle-tier components

• Oracle AS Middle-Tier = all the applications are deployed and run from middle-tier.

6. Which are the Middle-Tier components ?

• Oracle HTTP Server

• Oracle Containers for J2EE (OC4J)

• Oracle AS Portal

• Oracle AS Web Cache

7. Which are the main components of OracleAS Infrastructure ?

• OracleAS Metadata Repository = a part of an Oracle Database which stores information for various Oracle AS components;

• Oracle Identity Management

8. Which are the 3 categories of Metadata Repository ?

• Product metadata = used by products like Oracle AS Portal or Oracle AS Wireless

• Identity Management metadata = used by OID, SSO, CA

• Configuration Management metadata

Oracle AS 10g lets you install the Oracle AS Metadata Repository into an existing Oracle database; otherwise, it creates a new Oracle Database 10g for this purpose.

HAPPY LEARNING !

Friday, October 1, 2010

Configuring Middle-Tier JVMs for Applications 11i

When you call Oracle Support with a problem like apj12 errors in your mod_jserv.log, middle-tier Java Virtual Machines (JVMs) crashing, or poor middle-tier performance, you will often be advised to increase the number of JVM processes. So, the key question that likely occurs to you is, "How many JVMs are required for my system?"

Processing Java Traffic in Groups

First, some quick background: web requests received by Oracle HTTP Server (Apache) that need to run Java code are sent to one of four different types of JVM groups to be processed. You can see this in the jserv.conf file:

ApJServGroup OACoreGroup 2 1 /usr/.../jserv.properties

ApJServGroup DiscoGroup 1 1 /usr/.../viewer4i.properties

ApJServGroup FormsGroup 1 1 /usr/.../forms.properties

ApJServGroup XmlSvcsGrp 1 1 /usr/.../xmlsvcs.properties

The number of JVMs for each group is signified by the first number on each line.

OACoreGroup is the default group. This is where most Java requests will be serviced

DiscoGroup is only used for Discoverer 4i requests

FormsGroup is only used for Forms Servlet requests

XmlSvcsGrp is for XML Gateway, Web Services, and SOAP requests

In the example above, I have two JVMs configured for OACoreGroup and one JVM configured for each of the other groups.
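Reading the JVM count per group out of the file can be sketched as follows. This is a minimal illustration, assuming each ApJServGroup line has the layout shown above (group name followed by the number of JVMs); the function name is hypothetical and the sample lines are copied from the example, elided paths included.

```python
def jvm_counts(conf_text: str) -> dict:
    """Map each ApJServGroup name to its configured number of JVMs."""
    counts = {}
    for line in conf_text.splitlines():
        parts = line.split()
        # Expected layout: ApJServGroup <group> <number of JVMs> <second number> <properties file>
        if len(parts) >= 3 and parts[0] == "ApJServGroup":
            counts[parts[1]] = int(parts[2])
    return counts


# Lines copied from the jserv.conf example above.
sample = """
ApJServGroup OACoreGroup 2 1 /usr/.../jserv.properties
ApJServGroup DiscoGroup 1 1 /usr/.../viewer4i.properties
ApJServGroup FormsGroup 1 1 /usr/.../forms.properties
ApJServGroup XmlSvcsGrp 1 1 /usr/.../xmlsvcs.properties
"""

print(jvm_counts(sample))
```

Summing the values gives the total number of JVMs the server is configured to start.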

Factors Affecting Number of JVMs Required

Determining how many JVMs to configure is a complex approximation, as many factors need to be taken into account. These include:

Hardware specification and current utilization levels

Operating system patches and kernel settings

JDK version and tuning

Applications code versions, especially JDBC and oJSP

JServ configuration file tuning (jserv.properties and zone.properties)

Applications modules being used

How many active users

User behaviour

Rough Guidelines for JVMs

Luckily, Oracle Development have undertaken various performance tests to establish some rules of thumb that can be used to configure the initial number of JVMs for your system.

OACoreGroup

1 JVM per 100 active users

DiscoGroup

Use the capacity planning guide from Note 236124.1 "Oracle 9iAS 1.0.2.2 Discoverer 4i: A Capacity Planning Guide"

FormsGroup

1 JVM per 50 active forms users

XmlSvcsGrp

1 JVM is generally sufficient

In addition to this, Oracle generally recommends no more than 2 JVMs per CPU. You also need to confirm there are enough operating system resources (e.g. physical memory) to cope with any additional JVMs.
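The rules of thumb above can be turned into a first estimate. The sketch below is only a starting point under stated assumptions: the function name is hypothetical, DiscoGroup is left out because its sizing comes from the separate capacity planning guide, and a minimum of one JVM per group is assumed.

```python
import math


def suggested_jvms(active_users: int, active_forms_users: int, cpus: int) -> dict:
    """Initial JVM counts from the rules of thumb; not a substitute for load testing."""
    plan = {
        "OACoreGroup": max(1, math.ceil(active_users / 100)),       # 1 JVM per 100 active users
        "FormsGroup": max(1, math.ceil(active_forms_users / 50)),   # 1 JVM per 50 active forms users
        "XmlSvcsGrp": 1,                                            # 1 JVM is generally sufficient
    }
    plan["total"] = sum(plan.values())
    plan["within_cpu_limit"] = plan["total"] <= 2 * cpus            # no more than 2 JVMs per CPU
    return plan


# Hypothetical site: 350 active users, 120 active forms users, 4 CPUs.
print(suggested_jvms(350, 120, 4))
```

When within_cpu_limit is False, the estimate exceeds the 2-JVMs-per-CPU guideline and either more CPUs or another middle-tier server would be needed.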

Your Mileage Will Vary

The general guidelines above are just that -- they're very broad estimates, and your mileage will vary. As I write this, the Applications Technology Group is working on a JVM Sizing whitepaper that will provide guidelines based on whether your E-Business Suite deployment is small, medium, or large. I'll profile this whitepaper here as soon as it's released publicly.

Until then, it's critical that you test your environment under load, using transactional tests that closely mirror what your users will be doing. It's useful to use automated testing tools for this, as you create your benchmarks.

Here are a couple of quick-and-dirty tools that might be useful in sizing your JVMs.

Script to determine "active users" for OACoreGroup

REM
REM SQL to count number of Apps 11i users
REM Run as APPS user
REM
select 'Number of user sessions : ' ||
       count(distinct session_id) How_many_user_sessions
from icx_sessions icx
where disabled_flag != 'Y'
and PSEUDO_FLAG = 'N'
and (last_connect + decode(FND_PROFILE.VALUE('ICX_SESSION_TIMEOUT'), NULL,limit_time, 0,limit_time,FND_PROFILE.VALUE('ICX_SESSION_TIMEOUT')/60)/24) > sysdate
and counter < limit_connects;
REM
REM END OF SQL
REM

How to determine "active forms users" for FormsGroup

Check the number of f60webmx processes on the Middle Tier server. For example:

ps -ef | grep f60webmx | wc -l
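One thing to watch with a ps/grep/wc pipeline is that the grep process itself can show up in the listing and inflate the count by one. Here is a small Python sketch of the same count that skips such lines. The function names are mine, and the match is a simple substring test, so treat it as an illustration rather than a monitoring tool.

```python
import subprocess


def count_forms_processes(ps_output, name="f60webmx"):
    """Count lines of `ps -ef` output that mention `name`,
    skipping any grep process that is merely searching for it."""
    count = 0
    for line in ps_output.splitlines():
        if name in line and "grep" not in line:
            count += 1
    return count


def live_count(name="f60webmx"):
    """Take a live `ps -ef` listing on this host and count matches."""
    listing = subprocess.run(
        ["ps", "-ef"], capture_output=True, text=True, check=True
    ).stdout
    return count_forms_processes(listing, name)
```

Calling live_count() on the middle-tier server gives the number to feed into the FormsGroup guideline.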

Conclusion

The number of required JVMs is extremely site-specific and can be complex to predict

Use the rules of thumb as a starting point, but benchmark your environment carefully to see if they're adequate

Proactively monitor your environment to determine the efficiency of the existing settings and reevaluate if required

More on Java Memory Tuning Later

Once you've established the right number of JVMs to use, it's then time to optimize them. I'm intending to discuss Java memory tuning and OutOfMemory issues in a future article. Stay tuned.

HAPPY LEARNING!

Processing Java Traffic in Groups

First, some quick background: web requests that Oracle HTTP Server (Apache) receives for Java code are sent to one of four different types of JVM groups to be processed. You can see this in the jserv.conf file:

ApJServGroup OACoreGroup 2 1 /usr/.../jserv.properties
ApJServGroup DiscoGroup 1 1 /usr/.../viewer4i.properties
ApJServGroup FormsGroup 1 1 /usr/.../forms.properties
ApJServGroup XmlSvcsGrp 1 1 /usr/.../xmlsvcs.properties

The number of JVMs for each group is signified by the first number on each line.

OACoreGroup is the default group. This is where most Java requests will be serviced

DiscoGroup is only used for Discoverer 4i requests

FormsGroup is only used for Forms Servlet requests

XmlSvcsGrp is for XML Gateway, Web Services, and SOAP requests

In the example above, I have two JVMs configured for OACoreGroup and one JVM configured for each of the other groups.

HAPPY LEARNING!

How to determine if I/O is the real issue from AWR report?

DB FILE SEQUENTIAL READ

=======================

'Wait Time' = 493 x 100% / 83.2% = 592.54 (s)

'Service Time' = 11023 (s)

'Response Time' = 11023 + 592.54 = 11615.54 (s)

If we now calculate percentages for all the 'Response Time' components:

CPU time = 94.8 %

control file sequential read = 0.16 %

control file parallel write = 0.1 %

db file parallel write = 0.07 %

Summary:

It is now obvious that the I/O-related Wait Events are not really a significant component of the overall Response Time and that subsequent tuning should be directed to the Service Time component i.e. CPU consumption.
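The arithmetic above can be replayed in a few lines of Python. This is a sketch under my reading of the formula: 493 s is the wait time of the listed events and 83.2% is their share of total wait time. The function and parameter names are my own.

```python
def response_time_breakdown(service_time_s, sampled_wait_s, sampled_wait_pct):
    """Scale the sampled wait time up to the total, then split response time.

    sampled_wait_s   -- seconds of wait covered by the listed events
    sampled_wait_pct -- share of total wait time those events represent
    """
    wait_time_s = sampled_wait_s * 100.0 / sampled_wait_pct
    response_time_s = service_time_s + wait_time_s
    cpu_pct = 100.0 * service_time_s / response_time_s
    return wait_time_s, response_time_s, cpu_pct


wait, response, cpu = response_time_breakdown(11023, 493, 83.2)
print(f"wait={wait:.2f}s response={response:.2f}s cpu={cpu:.1f}%")
```

The printed figures can differ from the ones quoted above in the last digit, because the code rounds where the text appears to truncate. The conclusion is the same either way: CPU (Service Time) dominates the Response Time.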

HAPPY LEARNING!

R12- New File System Architecture

Base Directory: The directory where Oracle Applications is installed. This is a generic directory.

In the examples below, I assume that the Base Directory is /APPS.

$ORACLE_HOME (for the database tier): /APPS/db/tech_st/10.2.0

$APPL_TOP: /APPS/apps/apps_st/appl

$COMMON_TOP: /APPS/apps/apps_st/comn

$ORACLE_HOME (for the apps tier): /APPS/apps/tech_st/10.1.2

$IAS_ORACLE_HOME (for the apps tier): /APPS/apps/tech_st/10.1.3

$INST_TOP: /APPS/inst/apps/
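If you want to derive the same layout for a different Base Directory, a small Python sketch like the one below does it. The function name and dictionary keys are my own, the version directories are simply the ones from the listing above, and INST_TOP is kept as the prefix shown there.

```python
import posixpath


def r12_layout(base_dir):
    """Map each environment variable above to its path under base_dir."""
    join = posixpath.join
    return {
        "ORACLE_HOME (db tier)": join(base_dir, "db", "tech_st", "10.2.0"),
        "APPL_TOP": join(base_dir, "apps", "apps_st", "appl"),
        "COMMON_TOP": join(base_dir, "apps", "apps_st", "comn"),
        "ORACLE_HOME (apps tier)": join(base_dir, "apps", "tech_st", "10.1.2"),
        "IAS_ORACLE_HOME (apps tier)": join(base_dir, "apps", "tech_st", "10.1.3"),
        # Prefix only, exactly as far as the listing above goes.
        "INST_TOP": join(base_dir, "inst", "apps"),
    }
```

Calling r12_layout("/APPS") reproduces the paths listed above.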

HAPPY LEARNING!

Installing Oracle Apps 11i

Introduction

This post is about installing Oracle Apps 11i using Rapid Install. It is a very brief description of the install document provided by Oracle; for details, you can always refer to the official Oracle docs. I am providing the installation sequence for one of my test instances. I hope this will serve as a fast and simple guide to help you quickly understand the installation and its steps.

Basic installation of Oracle Applications 11i is divided into 3 parts.

1. Pre-installation

2. Installation

3. Post-installation.

Pre-Installation

i) Checking system requirement

In pre-installation we check the following requirements for installing Oracle Applications:

1) Software Requirement

2) CPU Requirement

3) Memory Requirement

4) Disk Space Requirement

ii) Creating staging area

We then create a staging area where we download and extract all the required files. The staging area after extracting the software will look as shown below.

[root@ocvmrh2122 11i10_CU2_115102]# ls -rlt
total 24
drwxr-xr-x  5 root root 4096 Oct 13  2005 oraDB
drwxr-xr-x 26 root root 4096 Oct 13  2005 oraAppDB
drwxr-xr-x  6 root root 4096 Oct 13  2005 oraiAS
drwxr-xr-x 10 root root 4096 Oct 13  2005 oraApps
drwxr-xr-x  9 root root 4096 May  7  2007 startCD

You need around 24G for staging area after extraction.
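Before extracting, it is worth checking that the target filesystem really has that much free space. A minimal Python sketch follows, assuming the 24G figure above means 24 GiB. The function name and the example path are hypothetical.

```python
import shutil

REQUIRED_STAGE_GB = 24  # figure quoted above for the extracted staging area


def has_room_for_stage(path, required_gb=REQUIRED_STAGE_GB):
    """Return True if the filesystem holding `path` has enough free space."""
    free_bytes = shutil.disk_usage(path).free
    return free_bytes >= required_gb * 1024 ** 3
```

For example, has_room_for_stage("/stage") tells you whether a (hypothetical) /stage mount point can hold the extracted software.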

iii) Creating User Accounts

Before we start the installation we need to create 2 users. One user (APPLMGR) will be the owner of the middle tier and the other user (ORACLE) will be the owner of the database. Assign “oinstall” as the primary group and “dba” as the secondary group for both users.

Check the display setting before starting the installation. You can set the DISPLAY to hostname:0.0

Installation

We begin the installation by running rapidwiz, which is in the startCD/Disk1/rapidwiz/ directory. Below are the screens that you will see, described along with my input for easy reference so that you can go through them quickly.

The Welcome screen lists the database version and the technology stack components that are installed with the E-Business Suite. Click on next.

You can either make a new installation or upgrade an existing installation. In our case we are going to do a new installation. With Express Configuration you supply a few basic parameters, such as database type and name, top-level install directory, and increments for port settings. The remaining directories and mount points are supplied by Rapid Install using default values.

If you have a configuration file saved from a previous installation, you can give that as input. If you answer No, Rapid Install saves the configuration parameters you enter on the wizard screens in a new configuration file (config.txt) that it will use to configure your system for the new installation.

You can select either a single-node installation or a multi-node installation. In our case we are going for a single-node installation.

Select the database type. We can have either a Vision demo database or a production database. A production database won't have any data. A Vision demo database will have test data present for our testing. If you are using the Vision demo database, your database will need around 130G-140G of space. For a production database, the space required is around 45G.

Set up the Oracle user and base install directory. Once you set the base install directory, all other directories will be set automatically. You can also edit individual directories like ORACLE_HOME or the db file location as per your requirement.

Select the type of licensing you got from Oracle. Completing a licensing screen does not constitute a license agreement. It simply registers your products as active.

If you select the E-Business Suite price bundle, you will see this screen with some of the checkboxes grayed. The products that are checked and grayed are licensed automatically as a part of the suite. The ones that are not must be registered separately as additional products; they are not part of the E-Business Suite price bundle. Place a check mark next to any additional products you have licensed and want to register.

Some systems require the country-specific functionality of a localized Applications product. For example, if your company operates in Canada, products such as Human Resources require additional features to accommodate the Canadian labor laws and codes that differ from those in the United States. In such a situation, select the proper country. In my case there is no country-specific functionality.

Select additional languages. By default US will be selected. If you want to install any more languages, you can select them from the available list.

The Select Internationalization Settings screen derives information from the languages you entered on the Select Additional Languages screen. You use it to further define NLS configuration parameters.

Select the user and base directory for the application side installation. Once you set the base install directory, all other directories will be set automatically. You can also edit individual directories like APPL_TOP, COMMON_TOP etc. as per your requirement.

Provide the domain name and the port ranges. As far as you know, choose ports that are not already in use; in any case, the installer will check for port conflicts before it installs the application. You can also change the individual port settings.

You have now completed all the information Rapid Install needs to set up and install a single-node system. The Save Instance-specific Configuration screen asks you to save the values you have entered in the wizard in a configuration file.

Review pre-install checks. This will check whether all the requirements are met or not.

Once all the requirements are met, please proceed further to install the application.

Before installation it will give a summary of the techstack it's going to install. Click on next.

You can see the progress of installation.

Once all the installation is done, it will show the components installed and their status.

Post-Installation

You can check for the post install steps from the metalink note ID 316365.1 as applicable.

Reference:

Oracle Apps 11i – Install Docs

Metalink Note ID: 316365.1

HAPPY LEARNING!

Steps in Applying a Patch - Oracle Apps 11i

a) Log in as applmgr and set the environment. On Windows, also check that CLASSPATH contains %JAVA_TOP% and %JAVA_TOP%\loadjava.zip.

b) Create a PATCH_TOP directory in the Base Directory for the patches that will be downloaded (placing it at the same level as APPL_TOP, COMMON_TOP, etc. is just a recommendation). If this directory already exists, this step can be skipped. An OS environment variable can be created for this directory. This is done only once, when the first patch is applied.

c) Download the patch you want to apply into the PATCH_TOP directory and unzip it.

d) Read and understand the README.txt file and complete the prerequisite or manual steps. If there are patches to apply as prerequisites, it is common to write up a document with all the steps involved in the patching process, and to apply the prerequisite patches before the initial patch.

e) Ensure that the PLATFORM environment variable is set (under UNIX, Linux, Solaris).

f) Shut down APPS services. The database services and the listener must be up and running.

g) Enable Maintenance Mode.

h) Start AutoPatch in interactive mode. This must be done from the directory where the patch driver was unzipped. Respond to the adpatch prompts. If there are more drivers to apply (when there is no unified driver, there could be a database (d), copy (c) or generate (g) driver), restart adpatch and apply the other drivers.

i) Review the log files. By default, the location is $APPL_TOP/admin//log and the file is adpatch.log.

j) Review the customizations (if any). If a customization was modified by this patch, the customization must be applied again.

For the customizations please look into the $APPL_TOP/admin/applcust.txt file.

k) Disable Maintenance Mode

l) Restart APPS services

m) Archive or delete the AutoPatch backup files.

2. How could I test the impact of the patch on the APPS environment ?

AutoPatch must be run in test mode (apply=no). The APPS services must be stopped and Maintenance Mode must be enabled as well. To see what the impact on the system would be, you can use Patch Impact Analysis in the Patch Wizard.

3. May I apply a patch if the APPS services are running and the Maintenance Mode is not enabled ?

If this is possible, the README.txt will let you know. If the patch README.txt file does not state this explicitly, you have to stop the APPS processes and enable Maintenance Mode. The help files can always be applied without stopping the APPS services.

If a patch can be applied without stopping the APPS services, we have to use the hotpatch option.

4. What is a non-standard patch ?

A non-standard patch is a regular patch (with a similar structure as a standard patch), but the naming is not standard (the naming of the driver file).

A standard patch driver is named with a one-letter prefix (u, c, d or g), followed by the patch number and the .drv extension. The patch number has 6-8 digits.
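To make the naming rule concrete, here is a small Python sketch that checks a driver file name. It reads the rule as a one-letter prefix (u, c, d or g), then a 6-8 digit patch number, then .drv. The function name is my own and the file names in the example are made up.

```python
import re

# One-letter driver type, then a 6-8 digit patch number, then ".drv".
STANDARD_DRIVER = re.compile(r"^([ucdg])(\d{6,8})\.drv$")

DRIVER_TYPES = {"u": "unified", "c": "copy", "d": "database", "g": "generate"}


def classify_driver(filename):
    """Return (driver type, patch number) for a standard name, else None."""
    match = STANDARD_DRIVER.match(filename)
    if match is None:
        return None
    prefix, patch_number = match.groups()
    return DRIVER_TYPES[prefix], patch_number
```

With this, classify_driver("u1234567.drv") is recognised as a unified driver for patch 1234567, while a name such as "custom_fix.drv" returns None, which marks it as non-standard.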

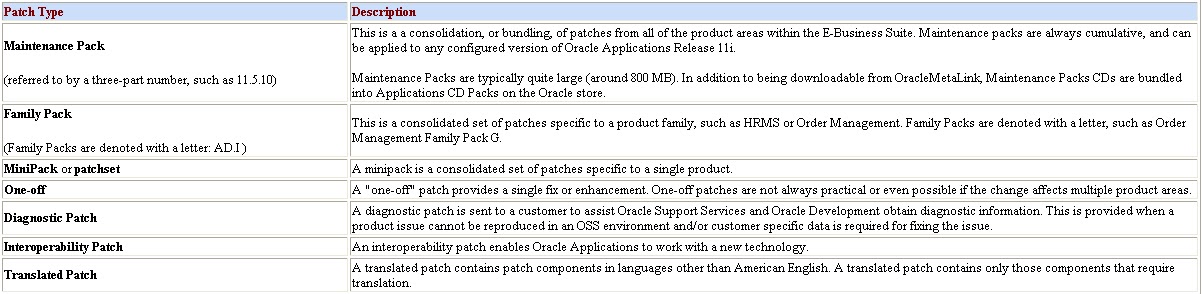

5. Which are the Oracle Applications Patch types ?

HAPPY LEARNING!

Oracle Advanced Replication - Setup

Steps for Setting Up Advanced Replication

Step 1 - Create 2 users: REPADMIN and REPSYS.

REPADMIN will perform all administration tasks related to advanced replication. REPSYS performs operations on SYS's behalf, as required by the advanced replication packages. SYSTEM will hold the replication tables. Set up the db links and grant the users the appropriate privileges.

At the master definition site:

REM Run this first on the main database as SYS.
REM Function: sets up the advanced replication users.
REM
SET echo on
SPOOL cr_rep1.out
CONNECT sys/&sys_passwd
ALTER database rename global_name to NEMP.WORLD;
CREATE USER repsys identified by &repsys_passwd
  default tablespace tools
  temporary tablespace temp
  quota unlimited on tools;
GRANT connect,resource to repsys;
DROP public database link PRODREP.world;
CREATE PUBLIC database link PRODREP.WORLD using 'PRODREP.WORLD';
DROP database link PRODREP.WORLD;
CREATE DATABASE link PRODREP.WORLD connect to repsys identified by &repsys_passwd;
CREATE USER repadmin identified by &repadmin_passwd
  default tablespace tools
  temporary tablespace temp
  quota unlimited on tools;
GRANT dba to repadmin;
GRANT execute on dbms_defer to repadmin with grant option;
GRANT execute on dbms_defer_query to repadmin;
EXECUTE dbms_repcat_admin.grant_admin_any_repgroup('repadmin');
EXECUTE dbms_repcat_auth.grant_surrogate_repcat('repsys');
CONNECT repadmin/&repadmin_passwd
DROP database link PRODREP.world;
CREATE DATABASE link PRODREP.WORLD connect to repadmin
  identified by &repadmin_passwd;
GRANT execute on sys.dbms_defer to ACME;
CONNECT ACME/&ACME_passwd
DROP database link PRODREP.world;
CREATE DATABASE link PRODREP.WORLD connect to ACME
  identified by &ACME_passwd;
SPOOL off;

Step 2 - Create the replication administration users at the master site, with the appropriate database links and database privileges.

REM To be run on the master site as SYS
REM Sets up the replication admin users and objects
REM
SET echo on
SPOOL cr_rep1.out
CONNECT sys/&sys_passwd
ALTER database rename global_name to PRODREP.WORLD;
CREATE USER repsys identified by &repsys_passwd
  default tablespace tools
  temporary tablespace temp
  quota unlimited on tools;
GRANT connect,resource to repsys;
DROP public database link NEMP.WORLD;
CREATE PUBLIC database link NEMP.WORLD using 'NEMP';
DROP database link NEMP.WORLD;
CREATE DATABASE link NEMP.WORLD connect to repsys
  identified by &repsys_passwd;
CREATE USER repadmin identified by &repadmin_passwd
  default tablespace tools
  temporary tablespace temp
  quota unlimited on tools
/
GRANT dba to repadmin;
GRANT execute on dbms_defer to repadmin with grant option;
GRANT execute on dbms_defer_query to repadmin;
EXECUTE dbms_repcat_admin.grant_admin_any_repgroup('repadmin');
EXECUTE dbms_repcat_auth.grant_surrogate_repcat('repsys');
CONNECT repadmin/&repadmin_passwd
DROP database link NEMP.WORLD;
CREATE DATABASE link NEMP.WORLD connect to repadmin
  identified by &repadmin_passwd;
GRANT execute on sys.dbms_defer to ACME;
CONNECT ACME/&ACME_passwd
DROP database link NEMP.WORLD;
CREATE DATABASE link NEMP.WORLD connect to ACME
  identified by &ACME_passwd;
SPOOL off;
SET echo off

From here on, you must perform all tasks as the REPADMIN user!

Step 3 - At the master site, pre-create the objects to be replicated.

If the table is constantly changing at the master definition site, it is better to create just the structures and have the replication manager copy the rows while creating the replication objects.

Step 4 - At the master definition site, create a replication schema.

exec dbms_repcat.create_master_schema('ACME');

Step 5 - At the master definition site, create the required replication group.

exec dbms_repcat.create_master_repgroup('BILLING_RUN');

Step 6 - Add the objects to the group. For example:

exec dbms_repcat.create_master_repobject(-
SNAME=>'ACME',ONAME=>'BILLINGSMELTERREDUCTION',TYPE=>'TABLE',-
USE_EXISTING_OBJECT=>true,COPY_ROWS=>true,GNAME=>'BILLING_RUN');

Step 7 - Generate replication support for the object.

This will create the packages and triggers for advanced replication.

exec dbms_repcat.generate_replication_support('BILLING_RUN', 'BILLINGSMELTERREDUCTION','TABLE');

Step 8 - Add one or more master site to the group.

exec dbms_repcat.add_master_database(-
GNAME=>'BILLING_RUN',MASTER=>'PRODREP.WORLD',-
USE_EXISTING_OBJECTS=>true,COPY_ROWS=>true);

At this point, a job should have been submitted automatically at both the master site and the master definition site:

JOB  WHAT
---- ------------------------------------------------------------
INTERVAL             LAST_DATE LAST_SEC BR NEXT_DATE NEXT_SEC
-------------------- --------- -------- -- --------- --------
41   dbms_repcat.do_deferred_repcat_admin('"ACME"', FALSE);
SYSDATE + (5/1440)   08-JUL-98 18:03:19 N  08-JUL-98 18:13:19

This job will execute the tasks listed in the view DBA_REPCATLOG. For example, add replication object, add master site, etc.
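A side note on the INTERVAL column: in Oracle date arithmetic 1 means one day, so SYSDATE + (5/1440) asks for the job to be rescheduled five minutes ahead. The tiny Python sketch below just replays that conversion; the function name is my own.

```python
from fractions import Fraction

MINUTES_PER_DAY = 24 * 60  # 1440


def interval_minutes(days):
    """Convert an Oracle date offset, expressed in days, to minutes."""
    return days * MINUTES_PER_DAY


# The INTERVAL shown above, SYSDATE + (5/1440), as an exact fraction of a day.
print(interval_minutes(Fraction(5, 1440)))
```

Using Fraction keeps the arithmetic exact, so the same helper works for other offsets such as 1/24 (one hour).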

At the master definition site, the job to replicate all SQL commands should also be submitted automatically. For example:

JOB  WHAT
---- ------------------------------------------------------------
INTERVAL             LAST_DATE LAST_SEC BR NEXT_DATE NEXT_SEC
-------------------- --------- -------- -- --------- --------
27   sys.dbms_defer_sys.execute(destination=>'PRODREP.WORLD', execute_as_user=>TRUE,
     batch_size=>140);
sysdate+5/1440       08-JUL-98 18:01:12 N  08-JUL-98 18:06:12

Step 9 - Resume master activity.

Once DBA_REPCATLOG is cleared, i.e., job 41 has completed successfully, you can resume master activity. Errors in executing job 41 will be recorded in DBA_REPCATLOG. To resume master activity, issue the command listed below.

exec dbms_repcat.resume_master_activity('BILLING_RUN');

From this point the replication group should change from QUIESCED to NORMAL mode. While the database is in QUIESCED mode, only SELECT statements are allowed against the database. In NORMAL mode, all DMLs are allowed.

HAPPY LEARNING!

Step 1 - Create 2 users: REPADMIN and REPSYS.

REPADMIN will perform all administration tasks related with advanced replication. REPSYS performs operations on SYS's behalf, as required by the advanced replication packages. SYSTEM will hold the replication tables. Set up the db links and grant the users the appropriate privileges.

At the master definition site:

REM Run this first on the main database as SYS.

REM Function: sets up the advanced replication users.

REM

SET echo on

SPOOL cr_rep1.out

CONNECT sys/&sys_passwd

ALTER database rename global_name to NEMP.WORLD;

CREATE USER repsys identified by &repsys_passwd

default tablespace tools

temporary tablespace temp

quota unlimited on tools;

GRANT connect,resource to repsys;

DROP public database link PRODREP.world;

CREATE PUBLIC database link PRODREP.WORLD using 'PRODREP.WORLD';

DROP database link PRODREP.WORLD;

CREATE DATABASE link PRODREP.WORLD connect to repsys identified by &repsys_passwd;

CREATE USER repadmin identified by &repadmin_passwd

default tablespace tools

temporary tablespace temp

quota unlimited on tools;

GRANT dba to repadmin;

GRANT execute on dbms_defer to repadmin with grant option;

GRANT execute on dbms_defer_query to repadmin;

EXECUTE dbms_repcat_admin.grant_admin_any_repgroup('repadmin');

EXECUTE dbms_repcat_auth.grant_surrogate_repcat('repsys');

CONNECT repadmin/&repadmin_passwd

DROP database link PRODREP.world;

CREATE DATABASE link PRODREP.WORLD connect to repadmin

identified by &repadmin_passwd;

GRANT execute on sys.dbms_defer to ACME;

CONNECT ACME/&ACME_passwd

DROP database link PRODREP.world;

CREATE DATABASE link PRODREP.WORLD connect to ACME

identified by &ACME_passwd;

SPOOL off;

Step 2 - Create the replication administration users at the master site, with the appropriate database links and database privileges.

REM To be run on the master site as SYS

REM Sites up the replication admin users and objects

REM

SET echo on

SPOOL cr_rep1.out

CONNECT sys/&sys_passwd

ALTER database rename global_name to PRODREP.WORLD;

CREATE USER repsys identified by &repsys_passwd

default tablespace tools

temporary tablespace temp

quota unlimited on tools;

GRANT connect,resource to repsys;

DROP public database link NEMP.WORLD;

CREATE PUBLIC database link NEMP.WORLD using 'NEMP';

DROP database link NEMP.WORLD;

CREATE DATABASE link NEMP.WORLD connect to repsys

identified by &repsys_passwd;

CREATE USER repadmin identified by &repadmin_passwd

default tablespace tools

temporary tablespace temp

quota unlimited on tools

/

GRANT dba to repadmin;

GRANT execute on dbms_defer to repadmin with grant option;

GRANT execute on dbms_defer_query to repadmin;

EXECUTE dbms_repcat_admin.grant_admin_any_repgroup('repadmin');

EXECUTE dbms_repcat_auth.grant_surrogate_repcat('repsys');

CONNECT repadmin/&repadmin_passwd

DROP database link NEMP.WORLD;

CREATE DATABASE link NEMP.WORLD connect to repadmin

identified by &repadmin_passwd;

GRANT execute on sys.dbms_defer to ACME;

CONNECT ACME/&ACME_passwd

DROP database link NEMP.WORLD;

CREATE DATABASE link NEMP.WORLD connect to ACME

identified by &ACME_passwd;

SPOOL off;

SET echo off

From here on, you must perform all tasks as the REPADMIN user!

Step 3 - At the master site, pre-create the objects to be replicated.

If the table is constantly changing at the master definition site, it is better to create just the structures and have the replication manager copy the rows while creating the replication objects.

Step 4 - At the master definition site, create a replication schema.

exec dbms_repcat.create_master_schema('ACME');

Step 5 - At the master definition site, create the required replication group.

exec dbms_repcat.create_master_repgroup('BILLING_RUN');

Step 6 - Add the objects to the group. For example:

exec dbms_repcat.create_master_repobject(-

SNAME=>'ACME',ONAME=>'BILLINGSMELTERREDUCTION',TYPE=>'TABLE',-

USE_EXISTING_OBJECT=>true,COPY_ROWS=>true,GNAME=>'BILLING_RUN');

Step 7 - Generate replication support for the object.

This will create the packages and triggers for advanced replication.

exec dbms_repcat.generate_replication_support('BILLING_RUN', 'BILLINGSMELTERREDUCTION','TABLE');

Step 8 - Add one or more master site to the group.

exec dbms_repcat.create_master_database(-

SNAME => 'ACME',oname => 'BILLINGSMELTERREDUCTION',type=>'TABLE',-

USE_EXISTING_OBJECT=>true,copy_rows => true,gname => 'BILLING_RUN');

At this point, there should be a jobs submitted automatically to the master site and master definition site:

JOB WHAT

---- ------------------------------------------------------------

INTERVAL LAST_DATE LAST_SEC BR NEXT_DATE NEXT_SEC

-------------------- --------- -------- -- --------- --------

41 dbms_repcat.do_deferred_repcat_admin('"ACME"', FALSE);

SYSDATE + (5/1440) 08-JUL-98 18:03:19 N 08-JUL-98 18:13:19

This job will execute the tasks listed in the view DBA_REPCATLOG. For example, add replication object, add master site, etc.

At the master definition site, the job to replicate all SQL command should also be automatically submitted. For example:

JOB WHAT

---- ------------------------------------------------------------

INTERVAL LAST_DATE LAST_SEC BR NEXT_DATE NEXT_SEC

-------------------- --------- -------- -- --------- --------

27 sys.dbms_defer_sys.execute(destination=>'PRODREP.WORLD', execute_as_user=>TRUE,

batch_size=>140);

sysdate+5/1440 08-JUL-98 18:01:12 N 08-JUL-98 18:06:12

Step 9 - Resume master activity.

Once DBA_REPCATLOG is cleared, i.e., job 41 has completed successfully, you can resume master activity. Any errors from executing job 41 are recorded in DBA_REPCATLOG. To resume master activity, issue the command listed below.

Exec dbms_repcat.resume_master_activity('BILLING_RUN');

From this point the replication group should change from QUIESCED to NORMAL mode. While the group is QUIESCED, only SELECT statements are allowed against its replicated objects. In NORMAL mode, all DML is allowed.
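The checks described in Steps 8 and 9 can be scripted. The sketch below is a small shell function that only prints SQL*Plus statements for a given replication group, so the output can be reviewed or piped into sqlplus. It assumes the standard DBA_JOBS, DBA_REPCATLOG and DBA_REPGROUP dictionary views; the function name repcat_check_sql is hypothetical and not part of any Oracle tool.

```shell
#!/bin/sh
# repcat_check_sql GNAME
# Print SQL*Plus statements that show the replication jobs, any pending or
# failed administrative requests, and the current status of one group.
# (Illustrative helper: it only prints text and runs nothing itself.)
repcat_check_sql() {
    gname=$1
    cat <<EOF
set pagesize 100 linesize 200
-- Jobs submitted by replication (compare with the listings in Step 8)
select job, what, interval, broken, next_date, next_sec from dba_jobs;
-- Requests still pending or in error; no rows means the log is cleared
select request, oname, status, message from dba_repcatlog where gname = '$gname';
-- QUIESCED before resume_master_activity, NORMAL afterwards
select gname, status from dba_repgroup where gname = '$gname';
exit
EOF
}
```

A possible use, assuming a replication administrator login of your own: repcat_check_sql BILLING_RUN | sqlplus -s USER/PWD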

HAPPY LEARNING!

GUIDE ON CREATING PHYSICAL STANDBY USING RMAN DUPLICATE

METALINK DOCID: 1075908.1

STEP BY STEP GUIDE ON CREATING PHYSICAL STANDBY USING RMAN DUPLICATE...FROM ACTIVE DATABASE WITHOUT SHUTTING DOWN THE PRIMARY AND USING PRIMARY ACTIVE DATABASE FILES [ID 1075908.1]

=========================

STEP-1 (KEEP THE FOLLOWING DETAILS HANDY)

============================================

PRIMARY Database Details

========================

SQL> sho parameter spfile

NAME TYPE VALUE

------------------------------------ ----------- ------------------------------

spfile string +DATADG1/spfilepmcpbs21.ora

SQL> sho parameter control_files

NAME TYPE VALUE

------------------------------------ ----------- ------------------------------

control_files string +DATADG1/bcrpbs20/controlfile/control01.ctl

SQL> sho parameter db_recovery_file_dest

NAME TYPE VALUE

------------------------------------ ----------- ------------------------------

db_recovery_file_dest string +datadg1 <-- This parameter should be defined

SQL> select FILE#,NAME from v$datafile;

FILE# NAME

---------- ----------------------------------------------------------------------------------------------------

1 +DATADG1/bcrpbs20/datafile/system.279.699561171

2 +DATADG1/bcrpbs20/datafile/sysaux.280.699561057

3 +DATADG1/bcrpbs20/datafile/undotbs1.276.699561241

..

..

..

73 +DATADG1/bcrpbs20/datafile/pbsd_racs_data.469.721768271

74 +DATADG1/bcrpbs20/datafile/pbsd_price_idx.464.721768335

76 +DATADG1/bcrpbs20/datafile/pbsd_stg_raw_data.450.721859367

77 +DATADG1/bcrpbs20/datafile/pbsd_racs_data.443.721859377

78 +DATADG1/bcrpbs20/datafile/pbsd_price_idx.470.721859383

60 rows selected.

SQL>

SQL> select MEMBER from v$logfile;

MEMBER

--------------------------------------------------------------------------------

+DATADG1/bcrpbs20/onlinelog/group_1.378.713448383

+DATADG1/bcrpbs20/onlinelog/group_1.369.713448389

+DATADG1/bcrpbs20/onlinelog/group_5.265.713446555

..

..

..

+DATADG1/bcrpbs20/onlinelog/group_33.421.713454787

+DATADG1/bcrpbs20/onlinelog/group_33.422.713454793

STANDBY Database Details

========================

SQL> sho parameter spfile

NAME TYPE VALUE

------------------------------------ ----------- ------------------------------

spfile string +DATADG1/pmcpbs20/spfilepmcpbs2.ora

SQL> sho parameter control_files

NAME TYPE VALUE

------------------------------------ ----------- ------------------------------

control_files string +DATADG1/pmcpbs20/controlfile/control01.ctl

SQL>

SQL> select FILE#,NAME from v$datafile;

FILE# NAME

---------- ----------------------------------------------------------------------------------------------------

1 +DATADG1/pmcpbs20/datafile/system.661.727505763

2 +DATADG1/pmcpbs20/datafile/sysaux.660.727505841

3 +DATADG1/pmcpbs20/datafile/undotbs1.662.727505217

..

..

..

77 +DATADG1/pmcpbs20/datafile/pbsd_racs_data.656.727505911

78 +DATADG1/pmcpbs20/datafile/pbsd_price_index.653.727506103

57 rows selected.

SQL>

STEP-2 TAKE BACKUP OF THE SPFILE OR PFILE OF STANDBY DATABASE

======================================================

(i) If the standby uses an spfile, create a pfile from it in a temporary location. If the standby uses a pfile, copy that pfile to the temporary location.

(ii) In the pfile from (i), set cluster_database=false if the standby is RAC.

(iii) Shut down the database (for RAC, using srvctl) and start it as a standalone instance with the pfile taken as backup.

bash-3.00$ ls -ltr

total 179752

-rw-r--r-- 1 oracle oinstall 3553 Aug 26 00:59 pfile_bkp.ora

bash-3.00$ pwd

/home/oracle/PFILE_CONTROL

bash-3.00$
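The pfile edit in STEP-2 can be sketched as a small shell function. This is a minimal sketch, assuming the pfile is plain text with one parameter per line; the function name make_standalone_pfile and the output file name in the usage line are hypothetical.

```shell
#!/bin/sh
# make_standalone_pfile SRC DEST
# Copy pfile SRC to DEST with cluster_database forced to false, so the standby
# can be started as a single instance. SRC itself is left untouched.
make_standalone_pfile() {
    src=$1
    dest=$2
    [ -r "$src" ] || { echo "cannot read $src" >&2; return 1; }
    # Drop every existing cluster_database line (any case, with or without a
    # "*." or "SID." prefix). grep exits 1 when no line is kept, which is fine.
    grep -v -i -E '^[[:space:]]*([^=[:space:]]*\.)?cluster_database[[:space:]]*=' "$src" > "$dest" || [ $? -eq 1 ] || return 1
    # Append the single-instance setting for all instances.
    echo '*.cluster_database=false' >> "$dest"
}
```

For example: make_standalone_pfile /home/oracle/PFILE_CONTROL/pfile_bkp.ora /home/oracle/PFILE_CONTROL/pfile_standalone.ora. Other parameters, such as cluster_database_instances, are copied unchanged.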

STEP-3 CREATE AN RMAN .RCV FILE AS BELOW

=========================================

run {

allocate channel prmy1 type disk;

allocate channel prmy2 type disk;

allocate channel prmy3 type disk;

allocate channel prmy4 type disk;

allocate channel prmy5 type disk;

allocate channel prmy6 type disk;

allocate auxiliary channel stby1 type disk;

allocate auxiliary channel stby2 type disk;

allocate auxiliary channel stby3 type disk;

allocate auxiliary channel stby4 type disk;

duplicate target database for standby from active database

spfile

set db_unique_name='PMCPBS20'

set control_files='+DATADG1/pmcpbs20/controlfile/control01.ctl'

set cluster_database='false'

set service_names='PMCPBS20'

set fal_client='pmcpbs20'

set fal_server='bcrpbs20'

set standby_file_management='AUTO'

set log_archive_config='dg_config=(PMCPBS20,BCRPBS20)'

set log_archive_dest_1='location="+ARCHDG1", valid_for=(ALL_LOGFILES,ALL_ROLES)'

nofilenamecheck;

}

Where,

.. set db_unique_name='PMCPBS20' <<-------- This should be the unique name of standby database

.. set control_files='+DATADG1/pmcpbs20/controlfile/control01.ctl' <<-------- This was the control file location found in STEP-1

.. set cluster_database='false' <<-------- For RAC this parameter is set FALSE in STEP-2

.. set service_names='PMCPBS20' <<-------- This SERVICE NAME should connect to standby database

STEP-4 RUN THE BELOW .RCV IN STANDBY SITE AS SHOWN BELOW

========================================================

nohup rman target USER/PWD@BCRPBS20_forrebuild auxiliary USER/PWD@PMCPBS20 cmdfile /home/oracle/PFILE_CONTROL/Rebuilt_BCRPBS20_26thAug.rcv log /home/oracle/PFILE_CONTROL/Rebuilt_BCRPBS20_26thAug.log &

Where,

USER/PWD@BCRPBS20_forrebuild <<---- BCRPBS20_forrebuild (This tns entry should be added to TNSNAMES.ORA for connecting to PRIMARY DB to only 1 node)

USER/PWD@PMCPBS20 <<---- PMCPBS20 (This tns entry should point to standby database itself)

LOG OF THE ABOVE STANDBY CREATION ACTIVITY SHOULD BE AS BELOW

==============================================================

Recovery Manager: Release 11.1.0.7.0 - Production on Thu Aug 26 04:22:32 2010

Copyright (c) 1982, 2007, Oracle. All rights reserved.

connected to target database: PMCPBS20 (DBID=1148223702)

connected to auxiliary database: PMCPBS20 (not mounted)

RMAN> run {

2> allocate channel prmy1 type disk;

3> allocate channel prmy2 type disk;

4> allocate channel prmy3 type disk;

5> allocate channel prmy4 type disk;

6> allocate channel prmy5 type disk;

7> allocate channel prmy6 type disk;

8> allocate auxiliary channel stby1 type disk;

9> allocate auxiliary channel stby2 type disk;

10> allocate auxiliary channel stby3 type disk;

11> allocate auxiliary channel stby4 type disk;

12> duplicate target database for standby from active database

13> spfile

14> set db_unique_name='PMCPBS20'

15> set control_files='+DATADG1/pmcpbs20/controlfile/control01.ctl'

16> set cluster_database='false'

17> set service_names='PMCPBS20'

18> set fal_client='pmcpbs20'

19> set fal_server='bcrpbs20'

20> set standby_file_management='AUTO'

21> set log_archive_config='dg_config=(PMCPBS20,BCRPBS20)'

22> set log_archive_dest_1='location="+ARCHDG1", valid_for=(ALL_LOGFILES,ALL_ROLES)'

23> nofilenamecheck;

24> }

25>

26>

using target database control file instead of recovery catalog

allocated channel: prmy1

channel prmy1: SID=1354 instance=PMCPBS21 device type=DISK

allocated channel: prmy2

channel prmy2: SID=1353 instance=PMCPBS21 device type=DISK

allocated channel: prmy3

channel prmy3: SID=1341 instance=PMCPBS21 device type=DISK

allocated channel: prmy4

channel prmy4: SID=1349 instance=PMCPBS21 device type=DISK

allocated channel: prmy5

channel prmy5: SID=1325 instance=PMCPBS21 device type=DISK

allocated channel: prmy6

channel prmy6: SID=1357 instance=PMCPBS21 device type=DISK

allocated channel: stby1

channel stby1: SID=2066 device type=DISK

allocated channel: stby2

channel stby2: SID=2065 device type=DISK

allocated channel: stby3

channel stby3: SID=2064 device type=DISK

allocated channel: stby4

channel stby4: SID=2753 device type=DISK

Starting Duplicate Db at 26-AUG-10

contents of Memory Script:

{

backup as copy reuse

file '/home/oracle/PMCPBS20/product/dbms/11G/dbs/orapwPMCPBS21' auxiliary format

'/home/oracle/PMCPBS20/product/dbms/11G/dbs/orapwPMCPBS21' file

'+DATADG1/spfilepmcpbs21.ora' auxiliary format

'+DATADG1/pmcpbs20/spfilepmcpbs2.ora' ;

sql clone "alter system set spfile= ''+DATADG1/pmcpbs20/spfilepmcpbs2.ora''";

}

executing Memory Script

Starting backup at 26-AUG-10

Finished backup at 26-AUG-10

sql statement: alter system set spfile= ''+DATADG1/pmcpbs20/spfilepmcpbs2.ora''

contents of Memory Script:

{

sql clone "alter system set db_unique_name =

''PMCPBS20'' comment=

'''' scope=spfile";

sql clone "alter system set control_files =

''+DATADG1/pmcpbs20/controlfile/control01.ctl'' comment=

'''' scope=spfile";

sql clone "alter system set cluster_database =

false comment=

'''' scope=spfile";

sql clone "alter system set service_names =

''PMCPBS20'' comment=

'''' scope=spfile";

sql clone "alter system set fal_client =

''pmcpbs20'' comment=

'''' scope=spfile";

sql clone "alter system set fal_server =

''bcrpbs20'' comment=

'''' scope=spfile";

sql clone "alter system set standby_file_management =

''AUTO'' comment=

'''' scope=spfile";

sql clone "alter system set log_archive_config =

''dg_config=(PMCPBS20,BCRPBS20)'' comment=

'''' scope=spfile";

sql clone "alter system set log_archive_dest_1 =

''location=^"+ARCHDG1^", valid_for=(ALL_LOGFILES,ALL_ROLES)'' comment=

'''' scope=spfile";

shutdown clone immediate;

startup clone nomount ;

}

executing Memory Script

sql statement: alter system set db_unique_name = ''PMCPBS20'' comment= '''' scope=spfile